This is a guest post from Christian Michel, Team Lead System Monitoring at Sensu partner Becon.

Network monitoring at scale is an age-old problem in IT. In this post, I’ll discuss a brief history of network monitoring tools — including the pain points of legacy technology when it came to monitoring thousands of devices — and share my modern-day solution using Sensu Go and Ansible.

Photo by Anastasia Dulgier on Unsplash

Then: Nagios and multiple network monitoring tools

I’ve spent the last ten years as a consultant for open source monitoring architectures. During this time, I’ve seen many companies of every size, based all over the world, with very different approaches to implementing and migrating monitoring environments and tools. Especially in small and medium-sized businesses, the demand for a one-size-fits-all solution is high. While big companies often use more than one monitoring solution (the “best of breed” approach), this option is often untenable for smaller businesses due to financial constraints. And sometimes having multiple monitoring tools makes no sense at all: the more tools you have, the more things you have to keep track of. Many IT organizations feel the best approach is to use one solution to monitor their entire infrastructure. This monolithic approach, with one tool that offers many functions (monitoring, root cause analysis, visualization, reporting, and so on), is not wrong as such. But when you need to scale, need true interoperability in big environments, or want to reduce your dependency on one tool and vendor, it’s better to separate these functions and run them as one interconnected solution, following the microservices approach.

My decade of experience has made me very familiar with Nagios, and I found it was usually very expensive to implement as a network monitoring solution. For example, creating all required checks for all network devices required operators to script the queries, as there was no API at that time. And since every single check for every single instance had to be scheduled and triggered (even though almost all checks are the same), the CPU workload on the Nagios backend was huge. Think about an environment with 10,000 network devices and 5 different checks: Nagios would have to schedule and trigger 50,000 checks. Sure, this approach lets you define scheduling in a highly granular fashion, but in reality all checks of the same kind run at the same interval (for example, all ICMP checks every minute and all disk checks every five minutes). It was therefore not uncommon to need a system with 32 CPU cores in a medium-sized environment, which was very expensive a decade ago.
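
To put a number on that scheduling load, here is a back-of-the-envelope calculation in Python. The device and check counts come from the paragraph above; the assumption that every check runs once per minute is only for illustration.

```python
# Numbers from the example above.
devices = 10_000
checks_per_device = 5

# Nagios schedules every check for every device individually.
scheduled_checks = devices * checks_per_device
print(scheduled_checks)  # 50000

# Assumption for illustration: every check runs once per minute.
interval_seconds = 60
executions_per_second = scheduled_checks / interval_seconds
print(round(executions_per_second))  # 833
```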

Although Nagios 4 offers several community extensions to improve performance, in retrospect using them feels like implementing workarounds to push the architectural limits of Nagios.

Now: workflow automation for monitoring with Sensu Go

Years later came Sensu, which considerably reduced the load of monitoring with its pub/sub design, and the recent release of Sensu Go has increased the performance and simplicity of the solution even more. And, while you can easily monitor all kinds of servers, virtual machines and containers with Sensu Go, it’s just as easy to monitor all your network devices, too!

In this day and age there is no alternative but to use tools for automation in your IT infrastructure. Since Sensu Go is API-driven, you can easily use configuration management tools like Ansible to automate your monitoring configuration and its contents.

When making minor, ad-hoc changes, I use sensuctl to configure Sensu Go. For initial imports or mass modifications, I use Ansible. But as I was not able to write Ansible modules, and I didn’t want to write Ansible tasks that merely wrap sensuctl commands in the “command” module, I decided to talk directly to the sensu-backend API with Ansible’s uri module. (Later I noticed that there is already an awesome Sensu Go Ansible role from Sensu Community member Jared Ledvina.)

In mid-2018, Sensu Go was in early beta and the API was not yet fully documented, so I had to work out the API calls myself before I could automate them in Ansible. Using Wireshark to capture the communication between sensuctl and the Sensu backend, I was able to learn which API calls are used. After a few minutes, I had all the necessary calls recorded as tasks in my Ansible roles. From this I derived my method of monitoring thousands of network devices with Sensu Go in a couple of minutes.
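
To give an idea of what talking directly to the backend API looks like, here is a minimal Python sketch. It is not the Ansible tasks described above. It assumes the entities endpoint of current Sensu Go releases (`/api/core/v2/namespaces/<namespace>/entities/<name>`) and API key authentication, which did not exist in the 2018 beta; the backend URL and the key are placeholders. The sketch only builds the request and does not send it.

```python
import json
import urllib.request

# Assumptions: placeholder backend URL and API key, not values from this post.
BACKEND_URL = "http://127.0.0.1:8080"
API_KEY = "REPLACE-ME"


def build_entity_request(entity: dict) -> urllib.request.Request:
    """Build (but do not send) a PUT request that creates or updates an entity."""
    meta = entity["metadata"]
    url = (
        f"{BACKEND_URL}/api/core/v2/namespaces/"
        f"{meta['namespace']}/entities/{meta['name']}"
    )
    return urllib.request.Request(
        url,
        data=json.dumps(entity).encode("utf-8"),
        method="PUT",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Key {API_KEY}",
        },
    )


# A trimmed-down version of the proxy entity shown later in this post.
entity = {
    "entity_class": "proxy",
    "metadata": {
        "name": "network-device-9000",
        "namespace": "default",
        "labels": {"ip_address": "10.10.10.10", "snmp_community": "public"},
    },
}

request = build_entity_request(entity)
print(request.get_method(), request.full_url)
# To actually send it: urllib.request.urlopen(request)
```

In an Ansible role, the same URL, headers, and JSON body would be passed to the uri module instead.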

In my fresh new Sensu Go environment I have some simple network checks defined. I mainly use the Nagios plugin check_nwc_health because it supports SNMP versions 1, 2c, and 3, as well as many different network devices out of the box.

The Sensu Go configuration of a proxy entity representing a network device, and of the check definition, looks like this:

Entity:

{

"entity_class": "proxy",

"system": {

"network": {

"interfaces": null

}

},

"subscriptions": [

""

],

"last_seen": 0,

"deregister": false,

"deregistration": {},

"metadata": {

"name": "network-device-9000",

"namespace": "default",

"labels": {

"device_model": "model3",

"device_type": "router",

"device_vendor": "bestVendor",

"ip_address": "10.10.10.10",

"snmp_community": "public",

"snmp_environment": "1",

"snmp_interfaces": "1",

"team": "networkteam1"

}

}

}

Check:

{

"command": "/usr/lib/nagios/plugins/check_nwc_health --hostname {{ .labels.ip_address }} --community {{ .labels.snmp_community }} --mode interface-health --multiline --morphperfdata ' '=''",

"handlers": [],

"high_flap_threshold": 0,

"interval": 60,

"low_flap_threshold": 0,

"publish": true,

"runtime_assets": [],

"subscriptions": [

"snmp_poller"

],

"proxy_entity_name": "",

"check_hooks": null,

"stdin": false,

"subdue": null,

"ttl": 0,

"timeout": 10,

"proxy_requests": {

"entity_attributes": [

"entity.entity_class == 'proxy' && entity.labels.snmp_interfaces == '1'"

]

},

"output_metric_format": "nagios_perfdata",

"output_metric_handlers": [

"influxdb"

],

"env_vars": null,

"metadata": {

"name": "snmp_interface-health",

"namespace": "default"

}

}
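
The check runs once for every proxy entity that matches `entity_attributes`, and the hostname and community in the command come from that entity's labels. The Python sketch below imitates both steps. It assumes the command carries Sensu label tokens such as `{{ .labels.ip_address }}`, which is what the sample output suggests. It is a simplified illustration, not Sensu's actual implementation: the `matches` function hard-codes the one expression used in this check.

```python
import re

# Matches Sensu-style label tokens such as {{ .labels.ip_address }}.
TOKEN = re.compile(r"\{\{\s*\.labels\.(\w+)\s*\}\}")


def matches(entity: dict) -> bool:
    """Python equivalent of the entity_attributes expression in the check."""
    labels = entity["metadata"]["labels"]
    return entity["entity_class"] == "proxy" and labels.get("snmp_interfaces") == "1"


def render_command(template: str, entity: dict) -> str:
    """Replace every label token with the value from the entity's labels."""
    labels = entity["metadata"]["labels"]
    return TOKEN.sub(lambda m: labels[m.group(1)], template)


entity = {
    "entity_class": "proxy",
    "metadata": {
        "name": "network-device-9000",
        "labels": {
            "ip_address": "10.10.10.10",
            "snmp_community": "public",
            "snmp_interfaces": "1",
        },
    },
}

template = (
    "/usr/lib/nagios/plugins/check_nwc_health "
    "--hostname {{ .labels.ip_address }} "
    "--community {{ .labels.snmp_community }} "
    "--mode interface-health --multiline"
)

if matches(entity):
    print(render_command(template, entity))
```

For the entity above this prints the plugin call with `--hostname 10.10.10.10 --community public` filled in.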

Sample output:

/usr/lib/nagios/plugins/check_nwc_health --hostname 10.10.10.10 --community public --mode interface-health --multiline --morphperfdata ' '=''

OK - lo is up/up

interface lo usage is in:0.00% (0.00bit/s) out:0.00% (0.00bit/s)

interface lo errors in:0.00/s out:0.00/s

interface lo discards in:0.00/s out:0.00/s

interface lo broadcast in:0.00% out:0.00%

ixp0 is up/up

interface ixp0 usage is in:2.13% (212775.67bit/s) out:6.02% (602186.17bit/s)

interface ixp0 errors in:0.00/s out:0.00/s

interface ixp0 discards in:0.00/s out:0.00/s

interface ixp0 broadcast in:0.01% out:0.00%

ipsec0 is up/up

interface ipsec0 usage is in:1.18% (118178.33bit/s) out:1.48% (147544.00bit/s)

interface ipsec0 errors in:0.00/s out:0.00/s

interface ipsec0 discards in:0.00/s out:0.00/s

interface ipsec0 broadcast in:0.00% out:0.00% | 'lo_usage_in'=0%;80;90;0;100 'lo_usage_out'=0%;80;90;0;100 'lo_traffic_in'=0;8000000;9000000;0;10000000 'lo_traffic_out'=0;8000000;9000000;0;10000000 'lo_errors_in'=0;1;10;; 'lo_errors_out'=0;1;10;; 'lo_discards_in'=0;1;10;; 'lo_discards_out'=0;1;10;; 'lo_broadcast_in'=0%;10;20;0;100 'lo_broadcast_out'=0%;10;20;0;100 'ixp0_usage_in'=2.13%;80;90;0;100 'ixp0_usage_out'=6.02%;80;90;0;100 'ixp0_traffic_in'=212775.67;8000000;9000000;0;10000000 'ixp0_traffic_out'=602186.17;8000000;9000000;0;10000000 'ixp0_errors_in'=0;1;10;; 'ixp0_errors_out'=0;1;10;; 'ixp0_discards_in'=0;1;10;; 'ixp0_discards_out'=0;1;10;; 'ixp0_broadcast_in'=0.01%;10;20;0;100 'ixp0_broadcast_out'=0%;10;20;0;100 'ipsec0_usage_in'=1.18%;80;90;0;100 'ipsec0_usage_out'=1.48%;80;90;0;100 'ipsec0_traffic_in'=118178.33;8000000;9000000;0;10000000 'ipsec0_traffic_out'=147544;8000000;9000000;0;10000000 'ipsec0_errors_in'=0;1;10;; 'ipsec0_errors_out'=0;1;10;; 'ipsec0_discards_in'=0;1;10;; 'ipsec0_discards_out'=0;1;10;; 'ipsec0_broadcast_in'=0%;10;20;0;100 'ipsec0_broadcast_out'=0%;10;20;0;100

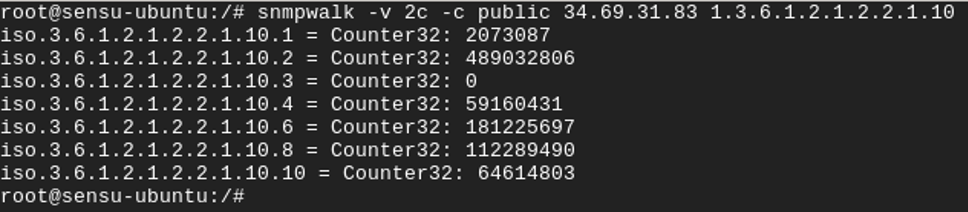

The check_nwc_health plugin gathers all necessary information (usage in percent, traffic in bits per second, errors, discards, and broadcast packets, for both incoming and outgoing traffic) from all interfaces of the device automatically. That is very helpful, as it’s not necessary to create 50 separate SNMP checks in this example. All information comes from one check. And because Sensu Go supports the Nagios plugin output format, it ships all these metrics to InfluxDB, where Grafana can visualize the data.
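
To show what is inside the performance data, here is a small Python sketch that splits the part after the `|` into individual metrics. It is only an illustration of the format, not how Sensu Go parses it internally, and it is simplified: it keeps the value and unit and ignores the warning, critical, minimum, and maximum fields.

```python
import re
import shlex

# A number followed by an optional unit, for example "2.13%" or "212775.67".
VALUE_WITH_UNIT = re.compile(r"^(-?\d+(?:\.\d+)?)(.*)$")


def parse_perfdata(plugin_output: str) -> dict:
    """Return {label: (value, unit)} from Nagios-style plugin output."""
    _, _, perfdata = plugin_output.partition("|")
    metrics = {}
    # shlex removes the single quotes around each label.
    for item in shlex.split(perfdata):
        label, _, rest = item.rpartition("=")
        match = VALUE_WITH_UNIT.match(rest.split(";")[0])
        if label and match:
            metrics[label] = (float(match.group(1)), match.group(2))
    return metrics


# Shortened excerpt of the sample output above.
sample = (
    "OK - lo is up/up | "
    "'ixp0_usage_in'=2.13%;80;90;0;100 "
    "'ixp0_traffic_in'=212775.67;8000000;9000000;0;10000000 "
    "'ixp0_errors_in'=0;1;10;;"
)

metrics = parse_perfdata(sample)
print(metrics["ixp0_usage_in"])    # (2.13, '%')
print(metrics["ixp0_traffic_in"])  # (212775.67, '')
```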

In order to avoid creating all network devices as proxy entities manually, I use the information in a configuration management database (CMDB) to create Ansible template files that include all the information my monitoring needs, one for every type of network device. With all network entities available as Ansible template files, the Ansible task of the role iterates over the template files and creates one proxy entity per device.
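
The same idea can be sketched in Python instead of Ansible templates. The CSV export and its column names below are hypothetical stand-ins for whatever a CMDB provides; the resulting dictionaries follow the shape of the proxy entity shown earlier.

```python
import csv
import io
import json

# Hypothetical CMDB export; the column names are assumptions for this sketch.
CMDB_EXPORT = """name,ip_address,device_type,device_vendor,snmp_community
network-device-9000,10.10.10.10,router,bestVendor,public
network-device-9001,10.10.10.11,switch,bestVendor,public
"""


def entity_from_row(row: dict) -> dict:
    """Turn one CMDB row into a Sensu Go proxy entity definition."""
    labels = {key: value for key, value in row.items() if key != "name"}
    labels["snmp_interfaces"] = "1"  # opt in to the interface-health check
    return {
        "entity_class": "proxy",
        "metadata": {
            "name": row["name"],
            "namespace": "default",
            "labels": labels,
        },
    }


entities = [entity_from_row(row) for row in csv.DictReader(io.StringIO(CMDB_EXPORT))]
print(len(entities))  # 2
print(json.dumps(entities[0], indent=2))
```

Each generated definition can then be pushed to the backend, for example with sensuctl or through the API.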

Once I had the Ansible template files set up, it only took me a couple of minutes to set up monitoring for thousands of network devices using Ansible and Sensu Go.

Some usage notes

The check_nwc_health plugin automatically calculates deltas and bandwidth utilization from the difference between two polled values. However, it stores the previous values in TMP files on the host that runs the check. This means you should avoid the round-robin feature in Sensu (which spreads the proxy queries across multiple agents), since the plugin needs consecutive queries of the same network device to run on the same proxy agent.

You should assign a fixed set of network devices to each proxy agent. If you require HA, you can set up the Sensu proxy agents in an N+1 HA mode (one spare Sensu agent in case another agent fails). To use round-robin instead, you would need to synchronize the TMP files across the agents on different hosts, and you would first have to verify that the plugin’s TMP file naming convention allows an agent running on another host to use the same TMP file.
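
One possible way to get such a fixed assignment is to compute it from the device name, so that the same device always maps to the same agent. The Python sketch below shows the idea; the poller names are hypothetical, and storing the result in an entity label that each poller's check filters on is an assumption, not something described in this post.

```python
import hashlib

# Hypothetical names of the proxy agents (for example, one subscription each).
POLLERS = ["snmp_poller_1", "snmp_poller_2", "snmp_poller_3"]


def assign_poller(device_name: str, pollers: list[str]) -> str:
    """Map a device to one poller, stable across runs and hosts."""
    digest = hashlib.sha256(device_name.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(pollers)
    return pollers[index]


for device in ["network-device-9000", "network-device-9001"]:
    print(device, "->", assign_poller(device, POLLERS))
```

Note that changing the number of pollers moves some devices to a different agent, where the plugin starts again without its previous TMP file.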

Closing thoughts

I’ve found the combination of Sensu Go, Ansible, and check_nwc_health to be very powerful for monitoring a large number of network devices.

The check_nwc_health plugin is very flexible and is available on GitHub, where all supported command line options are listed. A small sample of the available modes:

--mode

A keyword which tells the plugin what to do

- hardware-health (Check the status of environmental equipment (fans, temperatures, power))

- cpu-load (Check the CPU load of the device)

- memory-usage (Check the memory usage of the device)

- disk-usage (Check the disk usage of the device)

- interface-usage (Check the utilization of interfaces)

- interface-errors (Check the error-rate of interfaces (without discards))

- pool-connections (Check the number of connections of a load balancer pool)

- chassis-hardware-health (Check the status of stacked switches and chassis, count modules and ports)

- accesspoint-status (Check the status of access points)

(etc)

As an example:

check_nwc_health --hostname 10.0.12.114 --mode hardware-health --community public --verbose

Thanks for reading! If you’re in Europe and would like help with your monitoring setup, feel free to reach out to me: christian.michel@becon.de.